📖 My current research interests include time series forecasting, medical image segmentation and classification, control theory, and model order reduction.

If you are interested in academic collaboration, feel free to contact me by email:

📫: xiong.sijie.630@s.kyushu-u.ac.jp

I have been pursuing my Ph.D. at Kyushu University, Japan, since April 2024 under the supervision of Prof. Atsushi Shimada (島田 敬士 シマダ アツシ), and I have a joint background in Control & Optimization, Control Theory, Mechanics, Finance, and Deep Learning.

I graduated from the Department of Electrical and Electronic Engineering at Imperial College London with a Master's degree in Control Systems with Distinction in 2021.

Prior to that, I received my Bachelor's degree in Automation from the College of Energy and Electrical Engineering, Hohai University, in 2020.

I also obtained an honours Bachelor's degree in Applied Accounting from Oxford Brookes University, offered jointly with ACCA.

In addition to my degrees, I hold memberships and certifications from the following professional bodies in business and finance:

- 💰 CQF, since 2022 (with Distinction).

- ⛰️ FRM, since 2021.

- 📒 ACCA, since 2022.

- ♻️ CFA Sustainable Investing Certificate, since 2024.

I have published papers in journals and conferences including ASOC, CAI, EMBC, SMC, ICASSP, IEEE CYBCONF, IEEE PRMVAI, and ICML. You can find my publications on Google Scholar.

🔥 News

- The show must go on.

- 2026.07: 🎉 KUMA was accepted by ICML 2026.

- 2026.05: 🎉 KPMG (Oral) was accepted by IEEE ICASSP 2026.

- 2026.05: 🎉 GlucoMixer (Poster) was accepted by IEEE ICASSP 2026.

- 2026.05: 🎉 GluConv was accepted by IEEE PRMVAI 2026. (Invited as Workshop 21 Chair)

- 2026.04: 🎉 GluPIDHW was accepted by IEEE CYBCONF 2026. (Invited as Session Chair)

- 2025.10: 🎉 Attention Mamba was accepted by IEEE SMC 2025 and is available online.

- 2025.09: 🎉 FairytaleQA Chinese was accepted by APSCE ICLEA 2025, is available online, and won the Best Poster Award.

- 2025.08: 🎉 CME-Mamba was accepted by ASOC and is available online.

- 2025.07: 🎉 GluTANN was accepted by CAI 2025 and is available online.

📝 Publications

🩸 Diabetes Glucose Monitoring

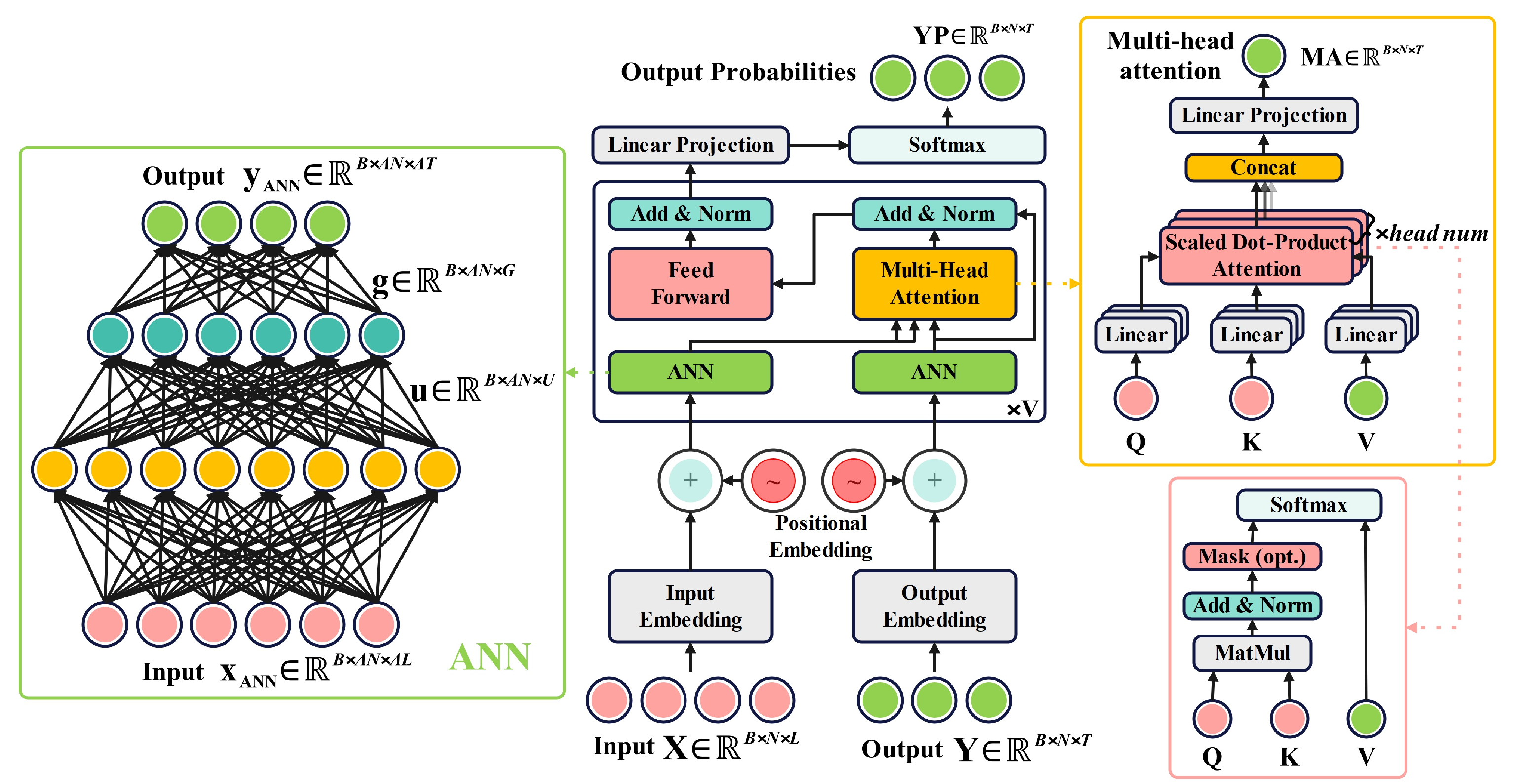

GluTANN: Transformer-Based Continuous Glucose Monitoring Model with ANN Attention

Sijie Xiong, Youhao Xu, Cheng Tang, Jianing Wang, Shuqing Liu, Atsushi Shimada

- Abstract: In this work, we propose GluTANN, an innovative model based on the Transformer architecture, in which specially designed ANNs act as self-attention and paired correlations are preserved by the encoder-decoder structure. Extensive experiments across five recognized datasets demonstrate that GluTANN is highly competitive in reducing uncertainty while preserving satisfactory accuracy, providing a feasible approach to effective glucose management and medical decision-making for diabetes.

- Core Idea: Reduce redundant tokens in Transformer-based models as far as possible.

- Domain: Time Series Forecasting, Diabetes, Glucose Monitoring

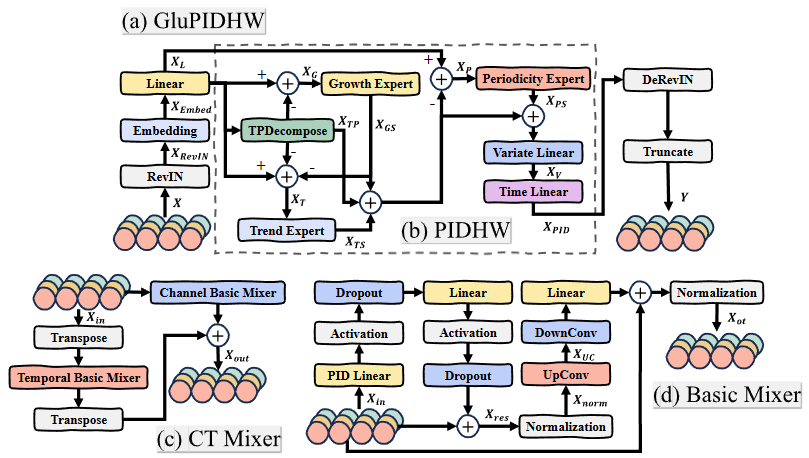

GluPIDHW: A PID-Holt-Winters Model for Personalized Glucose Monitoring

Youhao Xu+, Sijie Xiong+, Tao Sun, Jianing Wang, Cheng Tang, Atsushi Shimada

- Abstract: The increasing worldwide incidence of diabetes has created a pressing demand for accurate and reliable glucose monitoring. Nevertheless, conventional machine learning methods exhibit limitations in trustworthiness and personalization, while deep learning methods with excellent trustworthiness underperform in accuracy and responsiveness. To strike a balance, we propose an efficient model named GluPIDHW. Built upon an optimized Holt-Winters architecture augmented by a PID controller, GluPIDHW achieves improved accuracy and enhanced responsiveness. Extensive experiments conducted on five recognized datasets and out-of-distribution tasks demonstrate the superior performance of GluPIDHW over strong counterparts. This advantage is further validated by a Friedman test. Collectively, GluPIDHW offers a promising paradigm for continuous glucose monitoring and data-driven medical diagnosis.

- Core Idea: Draw an analogy between the PID controller and the Holt-Winters model.

- Domain: Time Series Forecasting, Diabetes, Glucose Monitoring

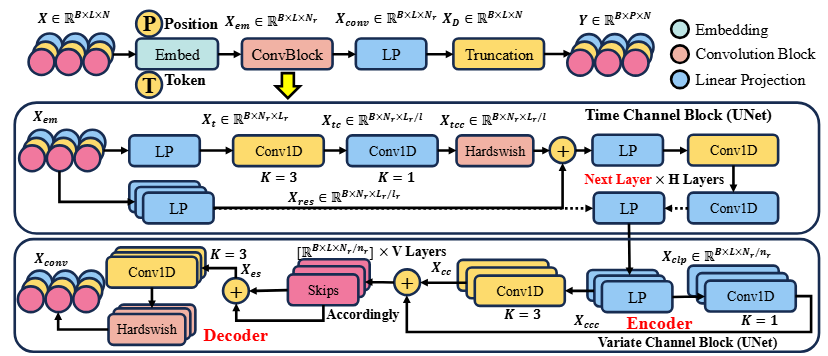

GluConv: Toward Trustworthy Convolutional Modeling for Sequential Signal Forecasting

Sijie Xiong, Haiqiao Liu, Yinlong Hu, Atsushi Shimada

- Abstract: Sequential signal forecasting is a fundamental challenge in machine learning, requiring models that simultaneously achieve high accuracy, responsiveness, and trustworthiness. Existing shallow machine learning approaches provide fast inference but frequently lack trustworthiness in long-term supervision, while deep learning methods with improved reliability typically suffer from reduced responsiveness and degraded accuracy. To address these limitations, we propose GluConv, an efficient convolution-based forecasting framework in which accuracy, trustworthiness, and responsiveness are well balanced and preserved. GluConv builds almost entirely upon multi-scale convolutional units to capture both variate correlation and temporal dependency in sequential signals. Extensive experiments on continuous glucose monitoring benchmarks demonstrate that GluConv consistently outperforms strong machine learning and deep learning baselines on reliability and responsiveness, while maintaining competitive accuracy. These properties make GluConv a Convincing, Convolutional, and Convenient scheme for trustworthy forecasting.

- Core Idea: Combine U-Net and Convolutions.

- Domain: Time Series Forecasting, Diabetes, Glucose Monitoring

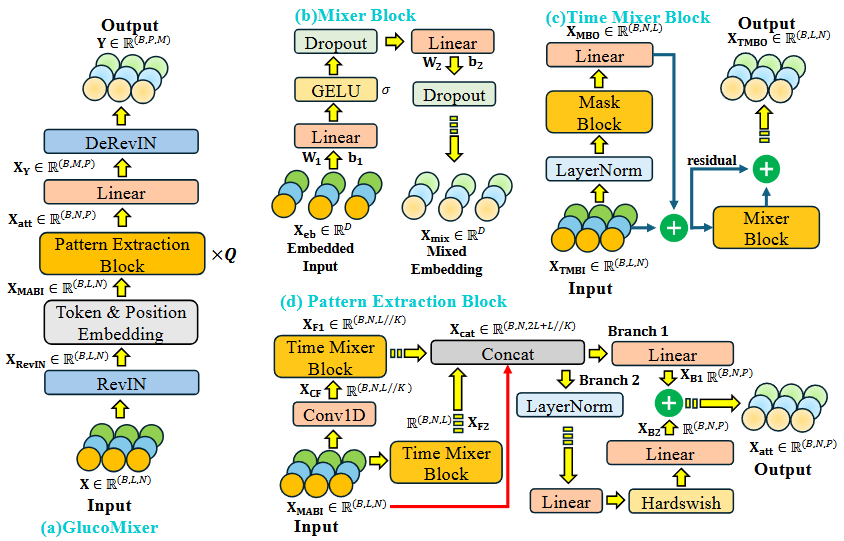

GlucoMixer: An Efficient Glucose Monitoring Model with Mixers

Sijie Xiong, Jianing Wang, Tao Sun, Cheng Tang, Fumiya Okubo, Atsushi Shimada

- Abstract: With the global prevalence of diabetes and the scarcity of definitive clinical schemes, the need for effective and reliable glucose monitoring has become imperative. Owing to their excellent responsiveness and precision, machine learning (ML) models have been widely employed in continuous glucose monitors (CGMs). However, recent studies have raised concerns that ML-based models often exhibit limited trustworthiness, injecting uncertainty into medical practice. In contrast, deep learning (DL) models are recognized as more trustworthy, but they struggle with accuracy. To strike a balance between accuracy and trustworthiness, we propose GlucoMixer, an Encoder-only architecture built predominantly with Mixer modules. To prevent future information leakage, we design a Mask Block that employs a lightweight triangular masking scheme. Given the presence of two diabetes types, we employ a convolutional layer to distinguish relevant information and use two Time Mixer Blocks to handle the distinct patterns accordingly. Comprehensive In-Distribution (ID) and Out-of-Distribution (OD) evaluations across five benchmark datasets highlight GlucoMixer's superior performance, with high predictive accuracy alongside excellent trustworthiness. According to the test ranking, GlucoMixer is more balanced across multiple evaluation metrics, demonstrating its potential as a practical spur for reliable glucose management and medical decisions.

- Core Idea: Combine linear projections and mask techniques to achieve a lightweight model.

- Domain: Time Series Forecasting, Diabetes, Glucose Monitoring

🚥🚦 Control & Time Series Forecasting

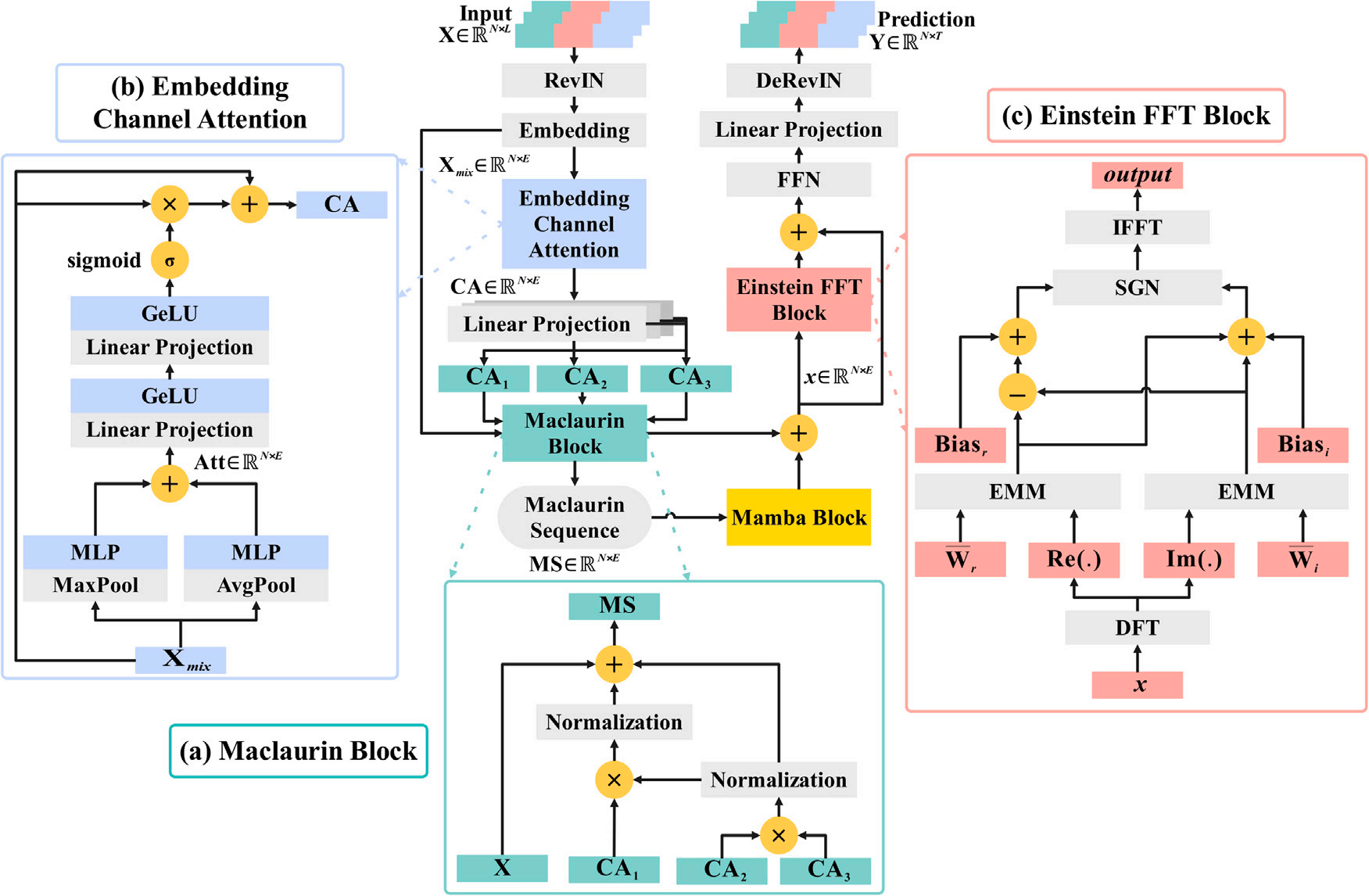

Enhancing Nonlinear Dependencies of Mamba via Negative Feedback for Time Series Forecasting

Sijie Xiong, Cheng Tang, Yuanyuan Zhang, Haoling Xiong, Youhao Xu, Atsushi Shimada

- Abstract: Inspired by the notion of curvature in finance and control systems, we propose CME-Mamba. Its effectiveness, stability, robustness, and related properties are discussed. Extensive experiments demonstrate that CME-Mamba excels at uncovering complex paradigms and predicting future states in various domains, with particular improvements in periodic and high-variate situations.

- Core Idea: Leverage a negative feedback loop to enhance non-linearity in TSF models.

- Domain: Time Series Forecasting, Control

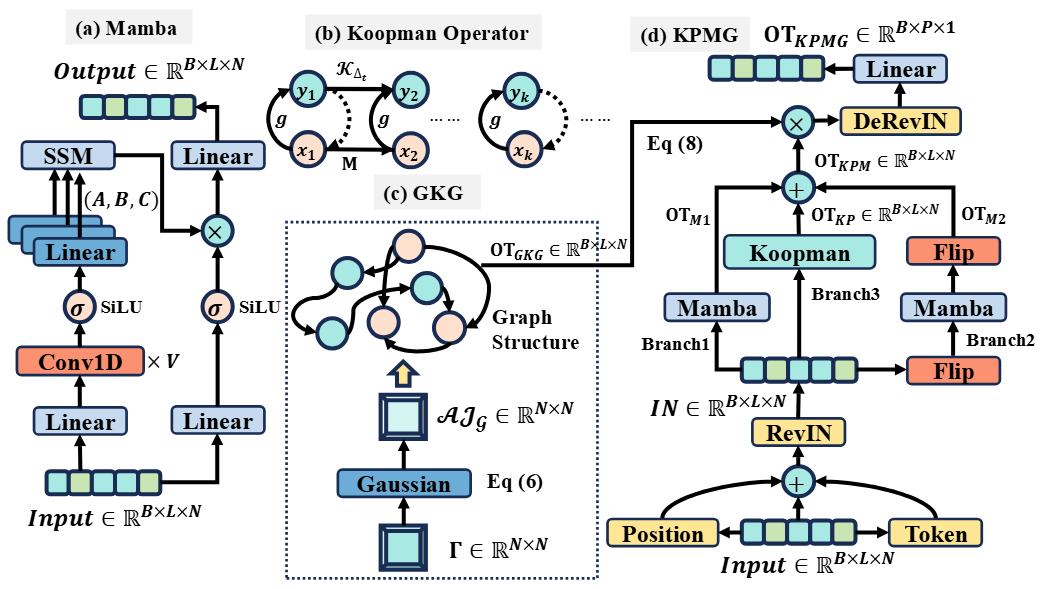

KPMG: A Graphical Koopman-Mamba Approach for Financial Markets

Sijie Xiong, Cheng Tang, Fumiya Okubo, Tsubasa Minematsu, Yinlong Hu, Atsushi Shimada

- Abstract: Financial markets, as reflected by indices, are substantial components of the global economy. While existing models have achieved significant forecasting performance, they struggle to balance temporal and variate dependencies, which results in a trade-off between predictive accuracy and trustworthiness. Moreover, current models suffer from disturbances and impurities embedded in financial data. To address these challenges, we propose KPMG, an efficient architecture that integrates the strengths of Mamba and Graph Neural Networks. With crucial features emphasized by the Koopman operator and both temporal and variate dependencies mixed in KPMG, accuracy and trustworthiness are significantly advanced. Extensive experiments on nine leading benchmarks across three index datasets demonstrate that KPMG is superior to its counterparts in prediction performance, while retaining acceptable computational complexity. Ablation studies further confirm the effectiveness of each designed module. The Friedman test consolidates the superiority of KPMG over its counterparts.

- Core Idea: Leverage Mamba and graph neural networks (GNNs) to predict financial markets.

- Domain: Time Series Forecasting, Finance, Index Forecasting

🏫 Education

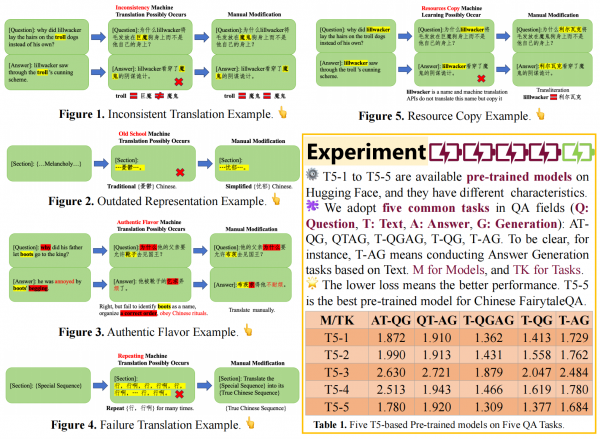

Fine-tuned T5 Models on FairytaleQA Chinese Dataset

Sijie Xiong, Haoling Xiong, Tao Sun, Haiqiao Liu, Fumiya Okubo, Cheng Tang, Atsushi Shimada

- Abstract: Question Answering (QA) is very important for comprehension learning, and FairytaleQA is widely employed in this domain. However, it is available in only a limited number of alphabetic languages, which restricts its application, and current translators suffer from five fatal errors. In our study, we manually translate FairytaleQA into Chinese and test its effectiveness via five fine-tuned T5 models.

- Core Idea: Extend current QA datasets on education for pre-trained models.

- Domain: Question-Answering, Education, Pre-trained Models

Others

- Jianing Wang, Wei Dai, Tianyun Li, Xiang Zhu, Weiye Xu, Sijie Xiong, “Optimization of Nonlinear Energy Sinks for Broadband Vibration Suppression in Marine Propulsion Systems”, Journal of Vibration and Control (JVC). Jan. 2026.

- Yuanyuan Zhang, Haocheng Zhao, Sijie Xiong, Rui Yang, Eng Gee Lim, Yutao Yue, “From High-SNR Radar Signal to ECG: A Transfer Learning Model with Cardio-Focusing Algorithm for Scenarios with Limited Data”, IEEE Transactions on Mobile Computing (TMC). Oct. 2025.

- Tao Sun, Sijie Xiong, Cheng Tang, Haichuan Yang, Fumiya Okubo, Atsushi Shimada, “Decomposed Seasonal-Trend Network with Rotary Attention for Time Series Forecasting”, in 2026 IEEE International Conference on Acoustics, Speech, and Signal Processing (ICASSP). May 2026.

- Yuanyuan Zhang, Sijie Xiong, Rui Yang, Eng Gee Lim, Yutao Yue, “Recover from Horcrux: A Spectrogram Augmentation Method for Cardiac Feature Monitoring from Radar Signal Components”, in 2025 47th Annual International Conference of the IEEE Engineering in Medicine & Biology Society (EMBC), IEEE. Nov. 2025.

- Haiqiao Liu, Tsubasa Minematsu, Chengjiu Yin, Sijie Xiong, Atsushi Shimada, “Exploring the Relationship Between System Operation Behaviors and Learning Achievement in Agricultural Education”, in 2025 33rd International Conference on Computers in Education (ICCE), IEEE. Oct. 2025.

- Tao Sun, Li Chen, Sijie Xiong, Cheng Tang, Gen Li, Atsushi Shimada, “FERL-YOLO: Facial Expression Recognition Model of Learners”, in 2025 International Conference on Learning Evidence and Analytics (ICLEA), APSCE. Sep. 2025.

- Shuqing Liu, Li Chen, Sijie Xiong, Haiqiao Liu, Cheng Tang, Atsushi Shimada, “DiaRoBERTa: A Multi-Party Dialogue Model for Multi-Skill Recognition in Classroom Collaborative Problem Solving Discussions”, in 2025 International Conference on Learning Evidence and Analytics (ICLEA), APSCE. Sep. 2025.

- Haiqiao Liu, Tsubasa Minematsu, Chengjiu Yin, Shuqing Liu, Sijie Xiong, Atsushi Shimada, “Integrating Scaffolding Strategies with Environmental Monitoring Systems to Enhance Learning and Practical Skills in Agricultural Education”, in 2025 International Conference on Learning Evidence and Analytics (ICLEA), APSCE. Sep. 2025.

🏅 Honors and Awards

- May 2026 Workshop 21 Chair of IEEE PRMVAI 2026 hosted by Hohai University.

- Apr. 2026 Session Chair of IEEE CYBCONF 2026 hosted by Nanjing University.

- Sep. 2025 The Best Poster Award of 2025 International Conference on Learning Evidence and Analytics (ICLEA).

- Oct. 2020 Honorary Title of Learning Model Student (2019-2020), Hohai University (1st Student in the 4th Academic Year).

- Oct. 2020 Academic Excellence Scholarship (2019-2020), Hohai University.

- Oct. 2020 Spiritual Civilization Scholarship (2019-2020), Hohai University.

- Oct. 2019 Academic Excellence Scholarship (2018-2019), Hohai University.

📖 Educational Background

- 2024.04 - 2026.09, SGU-Ph.D., Kyushu University, Fukuoka, Japan. (Early Graduation)

- 2020.10 - 2021.10, M.Sc., Imperial College London, London, UK.

- 2020.09 - 2021.09, B.Sc. (Hons), Oxford Brookes University, Oxford, UK.

- 2019.08 - 2019.08, Summer School: World Challenge and Innovation Program, Imperial College London, London, UK.

- 2016.09 - 2020.06, B.Eng., Hohai University, Nanjing, China.

💬 Employment

- 2021.12 - 2024.04, Senior Software Engineer, HUAWEI Technologies Co., Ltd., Shanghai.

💻 Internships

- 2021.09 - 2021.12, Portfolio Investment Strategy Intern, China Futures Co., Ltd., Shanghai.